
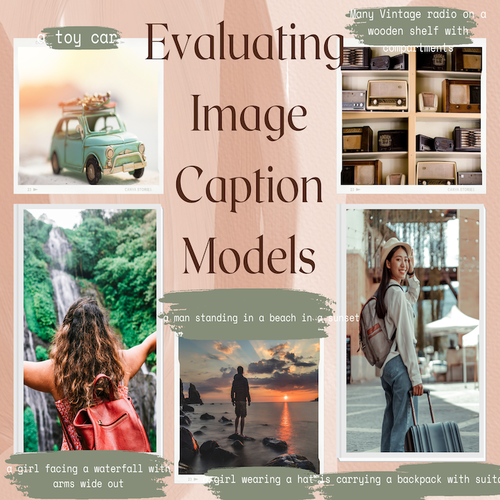

This project is a comparative study of image caption generation models. This experiment aims to provide:

- A detailed breakdown of the architectures and mechanisms of ViT-GPT2, BLIP, and GIT.

- A quantitative and qualitative analysis of their performance.

- Insights into the strengths, limitations, and suitability of each model for real-world applications.

The dataset utilized for this study consists of 600 images, sourced exclusively from open-access platforms to ensure accessibility and reproducibility. Each image was meticulously self-annotated with high-quality captions to create a reliable ground truth for evaluating the models’ performance.

Visit the Project on GitHub | View data in Kaggle

Dataset Composition

- Animals - Includes various species in diverse settings, such as wildlife, pets, and zoos.

- Humans - Depicts people in natural environments, performing activities, and interacting with objects.

- Architecture - Captures man-made structures, including buildings, bridges, and urban landscapes.

- Natural Formations and Nature - Covers landscapes, forests, mountains, rivers, and other natural scenes.

- Everyday Objects - Features commonly found objects, such as tools, household items, and vehicles.

The data that we collected have been uploaded to Kaggle. Please check them out here.

Dataset Challenges

- Diversity of Visual Content: Ensuring the dataset captures a wide variety of visual scenes and objects for generalizability.

- Annotation Quality: Maintaining consistency in style and accuracy across all annotated captions.

- Ambiguity: Handling images with multiple possible interpretations, where different valid captions could describe the same image.

Sample Image and Prompt

- Custom Annotation - Man attempting a slam dunk

- vit-gpt2 - a woman jumping in the air to catch a frisbee

- blip-conditional - a photography of a basketball player jumping to the basket

- blip-unconditional - a man jumping in the air with a basketball

- git - a young man playing basketball in a gym

Models used for caption generation

The models used for caption generation are:

- ViT-GPT2

- BLIP (conditional and unconditional modes)

- GIT

The captions folder in the repository contains all ground-truth captions as well as the model-generated captions.

The annotations can be viewed in this sheet or in the all_captions.csv file.
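The generation code itself is not shown on this page, so the following is only a sketch of how the three models could be run with the Hugging Face transformers library. The checkpoint names are assumptions taken from public model cards, not confirmed choices of this project. The sample BLIP conditional caption above begins with "a photography of", which is consistent with (but does not prove) the use of that prompt from the BLIP model card. Third-party packages (transformers, torch, Pillow) are imported inside the functions so the definitions load without them.

```python
from functools import lru_cache

# ASSUMED checkpoints from public Hugging Face model cards; the project may have used others.
BLIP_CHECKPOINT = "Salesforce/blip-image-captioning-base"
VIT_GPT2_CHECKPOINT = "nlpconnect/vit-gpt2-image-captioning"
GIT_CHECKPOINT = "microsoft/git-base-coco"


def load_rgb(path):
    """Open an image file and convert it to RGB (Pillow is imported lazily)."""
    from PIL import Image
    return Image.open(path).convert("RGB")


@lru_cache(maxsize=None)
def _blip():
    # Loaded once and cached, so captioning many images does not reload weights.
    from transformers import BlipForConditionalGeneration, BlipProcessor
    return (BlipProcessor.from_pretrained(BLIP_CHECKPOINT),
            BlipForConditionalGeneration.from_pretrained(BLIP_CHECKPOINT))


@lru_cache(maxsize=None)
def _vit_gpt2():
    from transformers import AutoTokenizer, ViTImageProcessor, VisionEncoderDecoderModel
    return (ViTImageProcessor.from_pretrained(VIT_GPT2_CHECKPOINT),
            AutoTokenizer.from_pretrained(VIT_GPT2_CHECKPOINT),
            VisionEncoderDecoderModel.from_pretrained(VIT_GPT2_CHECKPOINT))


@lru_cache(maxsize=None)
def _git():
    from transformers import AutoModelForCausalLM, AutoProcessor
    return (AutoProcessor.from_pretrained(GIT_CHECKPOINT),
            AutoModelForCausalLM.from_pretrained(GIT_CHECKPOINT))


def caption_blip(path, prompt=None):
    """Unconditional BLIP caption when prompt is None; otherwise the caption
    continues the given text prompt (conditional mode)."""
    processor, model = _blip()
    image = load_rgb(path)
    if prompt is None:
        inputs = processor(image, return_tensors="pt")
    else:
        inputs = processor(image, prompt, return_tensors="pt")
    output_ids = model.generate(**inputs)
    return processor.decode(output_ids[0], skip_special_tokens=True)


def caption_vit_gpt2(path):
    """ViT image encoder + GPT-2 text decoder; settings follow the model card example."""
    image_processor, tokenizer, model = _vit_gpt2()
    pixel_values = image_processor(images=load_rgb(path), return_tensors="pt").pixel_values
    output_ids = model.generate(pixel_values, max_length=16, num_beams=4)
    return tokenizer.decode(output_ids[0], skip_special_tokens=True).strip()


def caption_git(path):
    """GIT: an image encoder feeding a single transformer text decoder."""
    processor, model = _git()
    pixel_values = processor(images=load_rgb(path), return_tensors="pt").pixel_values
    output_ids = model.generate(pixel_values=pixel_values, max_length=50)
    return processor.batch_decode(output_ids, skip_special_tokens=True)[0]
```

For a hypothetical file image.jpg, caption_blip("image.jpg", prompt="a photography of") and caption_blip("image.jpg") would produce the conditional and unconditional BLIP captions respectively; model weights are downloaded on the first call.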

We used three metrics for our comparative study:

- METEOR

- BLEU-1

- BLEU-2
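The scoring code lives in score.ipynb and is not reproduced here, so the sketch below is an assumption rather than the project's implementation: a from-scratch, sentence-level BLEU with clipped n-gram precision, a brevity penalty, uniform weights, lowercase whitespace tokenization (the hypothetical `tokenize` helper), and no smoothing. Library implementations may tokenize, smooth, or average differently, so its numbers are not expected to match the results table. It is applied to the sample image captions listed earlier.

```python
import math
from collections import Counter


def tokenize(text):
    """ASSUMED preprocessing: lowercase and split on whitespace."""
    return text.lower().split()


def ngram_counts(tokens, n):
    """Count the n-grams (as tuples) in a token list."""
    return Counter(tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1))


def clipped_precision(candidate, references, n):
    """Modified n-gram precision: each candidate n-gram is credited at most as
    many times as it occurs in any single reference."""
    cand = ngram_counts(candidate, n)
    total = sum(cand.values())
    if total == 0:
        return 0.0
    max_ref = Counter()
    for ref in references:
        for gram, count in ngram_counts(ref, n).items():
            max_ref[gram] = max(max_ref[gram], count)
    matched = sum(min(count, max_ref[gram]) for gram, count in cand.items())
    return matched / total


def sentence_bleu(candidate, references, max_n):
    """Unsmoothed sentence-level BLEU-max_n with uniform weights 1/max_n."""
    precisions = [clipped_precision(candidate, references, n) for n in range(1, max_n + 1)]
    if min(precisions) == 0.0:
        return 0.0  # the geometric mean is zero if any precision is zero
    c = len(candidate)
    # Effective reference length: the one closest to c (shorter wins ties).
    r = min((abs(len(ref) - c), len(ref)) for ref in references)[1]
    brevity_penalty = 1.0 if c > r else math.exp(1 - r / c)
    return brevity_penalty * math.exp(sum(math.log(p) for p in precisions) / max_n)


# Usage on the sample image: one custom reference, four model captions.
reference = tokenize("Man attempting a slam dunk")
captions = {
    "vit-gpt2": "a woman jumping in the air to catch a frisbee",
    "blip-conditional": "a photography of a basketball player jumping to the basket",
    "blip-unconditional": "a man jumping in the air with a basketball",
    "git": "a young man playing basketball in a gym",
}
for name, caption in captions.items():
    cand = tokenize(caption)
    print(f"{name}: BLEU-1={sentence_bleu(cand, [reference], 1):.4f}, "
          f"BLEU-2={sentence_bleu(cand, [reference], 2):.4f}")
```

On this single image the BLIP unconditional and GIT captions share only "a" and "man" with the reference, and no model shares a single bigram with it, so unsmoothed BLEU-2 is zero for all four. This illustrates why bigram-based scores sit well below unigram ones.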

Results

The results are calculated in the score.ipynb notebook. The table and graphs obtained from the study are shown below:

| Model | METEOR | BLEU-1 | BLEU-2 |
|---|---|---|---|
| ViT-GPT2 | 0.1644 | 0.1845 | 0.0816 |
| GIT | 0.2207 | 0.2301 | 0.1170 |
| BLIP (Conditional) | 0.2418 | 0.2357 | 0.1246 |
| BLIP (Unconditional) | 0.2426 | 0.2555 | 0.1327 |
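As a quick consistency check on the summary below, the aggregate scores from the results table can be ranked per metric. This sketch uses only the table values (no per-image data is assumed) and also reports the relative gain of the best model over the weakest, a derived quantity not stated in the original results.

```python
# Aggregate scores copied from the results table.
SCORES = {
    "ViT-GPT2": {"METEOR": 0.1644, "BLEU-1": 0.1845, "BLEU-2": 0.0816},
    "GIT": {"METEOR": 0.2207, "BLEU-1": 0.2301, "BLEU-2": 0.1170},
    "BLIP (Conditional)": {"METEOR": 0.2418, "BLEU-1": 0.2357, "BLEU-2": 0.1246},
    "BLIP (Unconditional)": {"METEOR": 0.2426, "BLEU-1": 0.2555, "BLEU-2": 0.1327},
}
METRICS = ("METEOR", "BLEU-1", "BLEU-2")


def rank_models(scores, metric):
    """Return model names sorted from best to worst on one metric."""
    return sorted(scores, key=lambda model: scores[model][metric], reverse=True)


def relative_gain(scores, better, worse, metric):
    """Relative improvement of `better` over `worse` on `metric`, as a fraction."""
    return scores[better][metric] / scores[worse][metric] - 1.0


for metric in METRICS:
    ranking = rank_models(SCORES, metric)
    gain = relative_gain(SCORES, ranking[0], ranking[-1], metric)
    print(f"{metric}: {' > '.join(ranking)} (best vs. worst: +{gain:.1%})")
```

The ordering is identical on all three metrics, but the METEOR gap between the two BLIP modes (0.0008) is far smaller than the BLEU gaps.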

Quantitative Results and Qualitative Analysis

The quantitative results are:

- BLIP (Unconditional Mode) achieved the highest scores across all metrics (METEOR: 0.2426, BLEU-1: 0.2555, BLEU-2: 0.1327).

- BLIP (Conditional Mode), which guides generation with a text prompt, closely followed, with a nearly identical METEOR score and slightly lower BLEU scores (METEOR: 0.2418, BLEU-1: 0.2357, BLEU-2: 0.1246).

- GIT demonstrated a balanced performance (METEOR: 0.2207, BLEU-1: 0.2301, BLEU-2: 0.1170).

- ViT-GPT2 performed the weakest, struggling with visual-text alignment (METEOR: 0.1644, BLEU-1: 0.1845, BLEU-2: 0.0816).

Our qualitative observations are:

- BLIP models generated semantically rich and contextually accurate captions.

- GIT provided coherent but sometimes generic captions.

- ViT-GPT2 struggled with misidentification and irrelevant outputs.

Model Strengths and Weaknesses

The strengths are as follows:

- BLIP’s Dual Mode (Conditional/Unconditional) allowed better flexibility in caption generation.

- GIT’s unified transformer architecture helped in balancing vision-language processing.

- ViT-GPT2’s modularity enabled adaptability in vision and text alignment.

The weaknesses are as follows:

- BLIP required significant computational resources.

- GIT lacked interpretability due to its tightly coupled vision-language representation.

- ViT-GPT2 frequently misidentified objects and actions, leading to less reliable captions.

Evaluation Metrics

- METEOR captured semantic accuracy.

- BLEU-1 and BLEU-2 measured word precision and phrase coherence.

- Other advanced metrics (CIDEr, ROUGE-L, SPICE) were not included, limiting evaluation depth.
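For reference, the scoring function of the original METEOR formulation (Banerjee and Lavie, 2005) is shown below. Here $m$ is the number of candidate unigrams aligned to the reference (by exact, stemmed, or synonym match, which is what lets METEOR credit semantically equivalent wording), $w_c$ and $w_r$ are the candidate and reference lengths in unigrams, and $ch$ is the number of chunks, i.e. maximal runs of aligned unigrams that are contiguous and in the same order in both sentences. The score is 0 when $m = 0$. Which implementation and parameters score.ipynb uses is not stated, so the constants here are those of the original paper.

```latex
\begin{aligned}
P &= \frac{m}{w_c}, \qquad R = \frac{m}{w_r}, \qquad F_{\text{mean}} = \frac{10PR}{R + 9P} \\
\text{Penalty} &= 0.5\left(\frac{ch}{m}\right)^{3} \\
\text{METEOR} &= F_{\text{mean}}\,(1 - \text{Penalty})
\end{aligned}
```

The recall-weighted harmonic mean rewards covering the reference, while the fragmentation penalty lowers the score when matched words are scattered rather than in the reference's order.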

Limitations

- Small dataset size (600 images) reduced statistical reliability.

- Lack of advanced evaluation metrics limited the depth of the analysis.

- Real-world applicability was not tested, limiting practical insights.
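One common way to quantify the statistical reliability of a 600-image evaluation, not something this project reports, is a percentile bootstrap over per-image scores. Applied to per-image differences between two models scored on the same images, it indicates whether a gap such as the 0.0008 METEOR difference between the two BLIP modes is distinguishable from resampling noise. The sketch assumes per-image scores are available (for example exported from score.ipynb, which this page does not confirm), and the demo data is synthetic.

```python
import random
import statistics


def bootstrap_mean_ci(values, n_resamples=2000, confidence=0.95, seed=0):
    """Percentile bootstrap confidence interval for the mean of `values`.

    `values` can be per-image scores for one model, or per-image score
    differences between two models evaluated on the same images (paired).
    """
    rng = random.Random(seed)
    n = len(values)
    # Resample with replacement and record the mean of each resample.
    means = sorted(statistics.fmean(rng.choices(values, k=n)) for _ in range(n_resamples))
    lower_idx = int((1 - confidence) / 2 * n_resamples)
    upper_idx = n_resamples - 1 - lower_idx  # symmetric tails
    return means[lower_idx], means[upper_idx]


# Illustration only: synthetic per-image differences, NOT data from this project.
demo_rng = random.Random(42)
diffs = [demo_rng.gauss(0.001, 0.05) for _ in range(600)]
low, high = bootstrap_mean_ci(diffs)
print(f"mean difference = {statistics.fmean(diffs):.4f}, 95% CI = ({low:.4f}, {high:.4f})")
print("interval contains 0:", low <= 0 <= high)
```

If the interval for the paired differences contains 0, the data does not support ranking one model above the other on that metric.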

Combined METEOR for models tested

Combined BLEU-1 for models tested

Combined BLEU-2 for models tested
